So before I get underway, this article is about Microchip PIC micro-controllers. Please understand – I don’t want to get into a flamewars with Atmel, MPS430 or other fanboys, my personal preference has been PIC’s for many years, thats a statement of personal preference, I am not saying that PIC’s are better than anything else I am just saying I like them better – please don’t waste your time trying to convince me otherwise, I have evaluated most other platforms numerous times already – so before you suggest I should look at your XYZ platform of choice, please save your time – the odds are good I have already done so and I am still using PIC’s

OK, full RANT mode enabled…

As I understand it Microchip are in the silicon chip business selling micro-controllers – actually Microchip make some really awesome parts and I am guessing here but I suspect they probably want to sell lots and lots of those awesome parts right? So why do they suppress their developer community with crippled compiler tool software unless you pay large $$$, after all, as a silicon maker they *NEED* to provide tools to make it viable for a developer community to use their parts? It is ridiculous charging for the tools – its not like you can buy Microchip tools and then use them for developing on other platforms so the value of these tools is entirely intrinsic to Microchip’s own value proposition. It might work if you have the whole market wrapped up but the micro-controller market is awash with other great parts and free un-crippled tools.

A real positive step forward for Microchip was with the introduction of MPLAB-X IDE – while not perfect its infinitely superior to the now discontinued MPLAB8 and older IDE’s which were, err, laughable by other comparable tools. The MPLAB-X IDE has a lot going for it, it runs on multiple platforms (Windows, Mac and Linux) and it mostly works very well. I have been a user of MPLAB-X from day one and while the migration was a bit of a pain and the earlier versions had a few odd quirks, every update of the IDE has just gotten better and better – I make software products in my day job so I know what it takes, and to the product manager(s) and team that developed the MPLAB-X IDE I salute you for a job well done.

Now of course the IDE alone is not enough, you also need a good compiler too – and for the Microchip parts there are now basically three compilers, XC8, XC16 and XC32. These compilers as I understand it are based on the HI-TECH PRO compilers that Microchip acquired when they bought HI-TECH in 2009. Since that acquisition they have been slowly consolidating the compilers and obsoleting the old MPLAB C compilers. Microchip getting these tools is a very good thing because they needed something better than they had – but they had to buy the Australian company HI-TECH Software to get them, it would appear they could not develop these themselves so acquiring them would be the logical thing to do. I can only speculate that the purchase of HI-TECH was most likely justified both internally and/or to investors, on the promise of generating incremental revenues from the tools, otherwise why bother buying them right? any sound investment would be made on the basis of being backed by a revenue plan and the easiest way to do that would be to say, in the next X years we can sell Y number of compilers for Z dollars and show a return on investment. Can you imagine an investor saying yes to “Lets by HI-TECH for $20M (I just made that number up) so we can refocus their efforts on Microchip parts only and then give these really great compilers and libraries away!”, any sensible investor or finance person would probably ask the question “why would we do that?” or “where is the return that justifies the investment”. But, was expanding revenue the *real* reason for Microchip buying HI-TECH or was there an undercurrent of need to have the quality the HI-TECH compilers offered over the Microchip Compilers, it was pretty clear that Microchip themselves were way behind – but that storyline would not go down too well with investors, imagine suggesting “we need to buy HI-TECH because they are way ahead of us and we cannot compete”, and anyone looking at that from a financial point of view would probably not understand why having the tools was important without some financial rationale that shows on paper that an investment would yield a return.

Maybe Microchip bought HI-TECH as a strategic move to provide better tools for their parts but I am making the assumption there must have been some ROI commitment internally – why? because Microchip do have a very clear commercial strategy around their tools, they provide free compilers but they are crippled generating unoptimised code, in some cases the code generated has junk inserted, the optimised version simply removes this junk! I have also read somewhere that you can hack the setting to use different command-line options to re-optimise the produced code even on the free version because at the end of the day its just GCC behind the scenes. However, doing this may well revoke your licences right to use their libraries.

So then, are Microchip in the tools business? Absolutely not. In a letter from Microchip to its customers after the acquisition of HI-TECH [link] they stated that “we will focus our energies exclusively on Microchip related products” which meant dropping future development for tools for other non-Microchip parts that HI-TECH used to also focus on. As an independent provider HI-TECH could easily justify selling their tools for money, their value proposition was they provided compilers that were much better than the “below-par” compilers put out by Microchip, and being independent there is an implicit justification for them charging for the tools – and as a result the Microchip customers had an choice – they could buy the crappy compiler from Microchip or the could buy a far superior one from HI-TECH – it all makes perfect sense. You see, you could argue that HI-TECH only had market share in the first place because the Microchip tools were poor enough that there was a need for someone to fill a gap. Think about it, if Microchip had made the best tools from day one, then they would have had the market share and companies like HI-TECH would not have had a market opportunity – and as a result Microchip would not have been in the position where they felt compelled to buy HI-TECH in the first place to regain ground and possibly some credibility in the market. I would guess that Microchip’s early strategy included “let the partners/third parties make the tools, we will focus on silicon” which was probably OK at the time but the world moved on and suddenly compilers and tools became strategically important element to Microchip’s go-to-market execution.

OK, Microchip now own the HI-TECH compilers, so why should they not charge for them? HI-TECH did and customers after all were prepared to pay for them so why should Microchip now not charge for them? Well I think there is a very good reason – Microchip NEED to make tools to enable the EE community to use their parts in designs to ensure they get used in products that go to market. As a separate company, HI-TECH were competing with Microchips compilers, but now Microchip own the HI-TECH compilers so their is no competition and if we agree that Microchip *MUST* make compilers to support their parts, then they cannot really justify selling them in the same way as HI-TECH was able to as an independent company – this is especially true given the fact that Microchip decided to obsolete their own compilers that the HI-TECH ones previously competed with – no doubt they have done this in part at least to reduce the cost of (and perhaps reduced the team size needed in) maintaining two lots of code and most likely to provide their existing customers of the old compilers with a solution that solved those outstanding “old compiler” issues. So they end up adopting a model to give away limited free editions and sell the unrestricted versions to those customers that are willing to pay for them. On the face of it thats a reasonable strategy – but it alienates the very people they need to be passionate about their micro-controller products.

I have no idea what revenues Microchip derives from their compiler tools – I can speculate that their main revenue is from the sale of silicon and that probably makes the tools revenue look insignificant. Add to that fact the undesirable costs in time and effort in maintaining and administering the licences versions, dealing with those “my licence does not work” or “I have changed my network card and now the licence is invalid” or “I need to upgrade from this and downgrade from that” support questions and so on….this must be a drain on the company, the energy that must be going into making the compiler tools a commercial subsidiary must be distracting to the core business at the very least.

Microchip surely want as many people designing their parts into products as possible, but the model they have alienates individual developers and this matters because even on huge projects with big budgets the developers and engineers will have a lot of say in the BOM and preferred parts. Any good engineer is going to use parts that they know (and perhaps even Love) and any effective manager is going to go with the hearts and minds of their engineers, thats how you get the best out of your teams. The idea that big budget projects will not care about spending $1000 for a tool is flawed, they will care more than you think. For Microchip to charge for their compilers and libraries its just another barrier to entry – and that matters a lot.

So where is the evidence that open and free tools matter – well, lets have a look at Arduino – you cannot help but notice that the solution to almost every project that needs a micro-controller these days seems to be solved with an Arduino! and that platform has been built around Atmel parts, not Microchip parts. What happened here? With the Microchip parts you have much more choice and the on-board peripherals are generally broader in scope with more options and capabilities, and for the kinds of things that Arduino’s get used for, Microchip parts should have been a more obvious choice, but Atmel parts were used instead – why was that?

The success of the Arduino platform is undeniable – if you put Arduino in your latest development product name its pretty much a foregone conclusion that you are going to sell it – just look at the frenzy amongst the component distributors and the Chinese dev board makers who are all getting in on the Arduino act, and why is this? well the Arduino platform has made micro-controllers accessible to the masses, and I don’t mean made them easy to buy, I mean made them easy to use for people that would otherwise not be able to set up and use a complex development environment, toolset and language, and the Arduino designers also removed the need to have a special programmer/debugger tool, a simple USB port and a boot-loader means that with just a board and a USB cable and a simple development environment you are up and running which is really excellent. You are not going to do real-time data processing or high speed control systems with an Arduino because of its hardware abstraction but for many other things the Arduino is more than good enough, its only a matter of time before Arduino code and architectures start making it into commercial products if they have not already done so. There is no doubt that the success of the Arduino platform has had a positive impact on Atmel’s sales and revenues.

I think I was pretty close to the mark when I was thinking that because Atmel used an open toolchain based on the GCC compiler and open source libraries, when the team who developed the Arduino project started work on their Arduino programming language, having the toolchain open and accessible probably drove their adoption decision – that was pure speculation on my part and that was bugging me so I thought I would try to find out more.

Now this is the part where the product team, executives and the board at Microchip should pay very close attention. I made contact with David Cuartielles who is Assistant Professor at Malmo University in Sweden, but more relevant here is that he is one of the Co-founders of the original Arduino project. I wrote David and asked him…

“I am curious to know what drove the adoption of the Atmel micro controllers for the Arduino platform? I ask that in the context of knowing PIC micro controllers and wondering with the rich on-board peripherals of the PIC family which would have been useful in the Arduino platform why you chose Atmel devices.”

David was very gracious and responded within a couple of hours. He responded with the following statement:

“The decision was simple, despite the fact that -back in 2005- there was no Atmel chip with USB on board unlike the 18F family from Microchip that had native USB on through hole chips, the Atmel compiler was not only free, but open source, what allowed migrating it to all main operating systems at no expense. Cross-platform cross-compilation was key for us, much more than on board peripherals.”

So on that response, Microchip should pay very close attention. The 18F PDIP series Microcontroller with onboard USB was the obvious choice for the Arduino platform and had the tooling strategy been right the entire Arduino movement today could well have been powered by Microchip parts instead of Atmel parts – imagine what that would have done for Microchip’s silicon sales!!! The executive team at Microchip need to learn from this, the availability of tools and the enablement of your developer community matters – a lot, in fact a lot more than your commercial strategy around your tooling would suggests you might believe.

I also found this video of Bob Martin at Atmel stating pretty much the same thing.

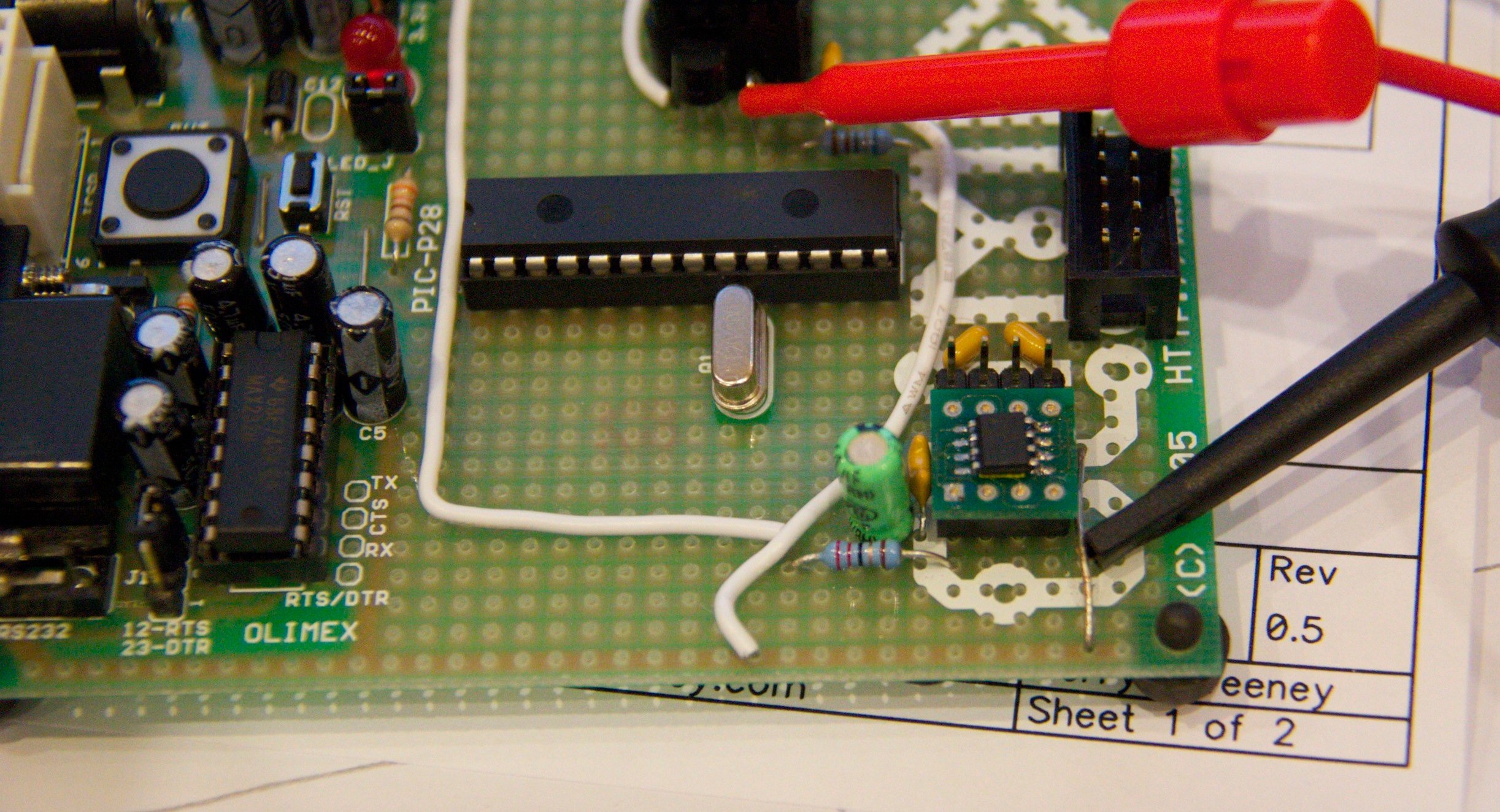

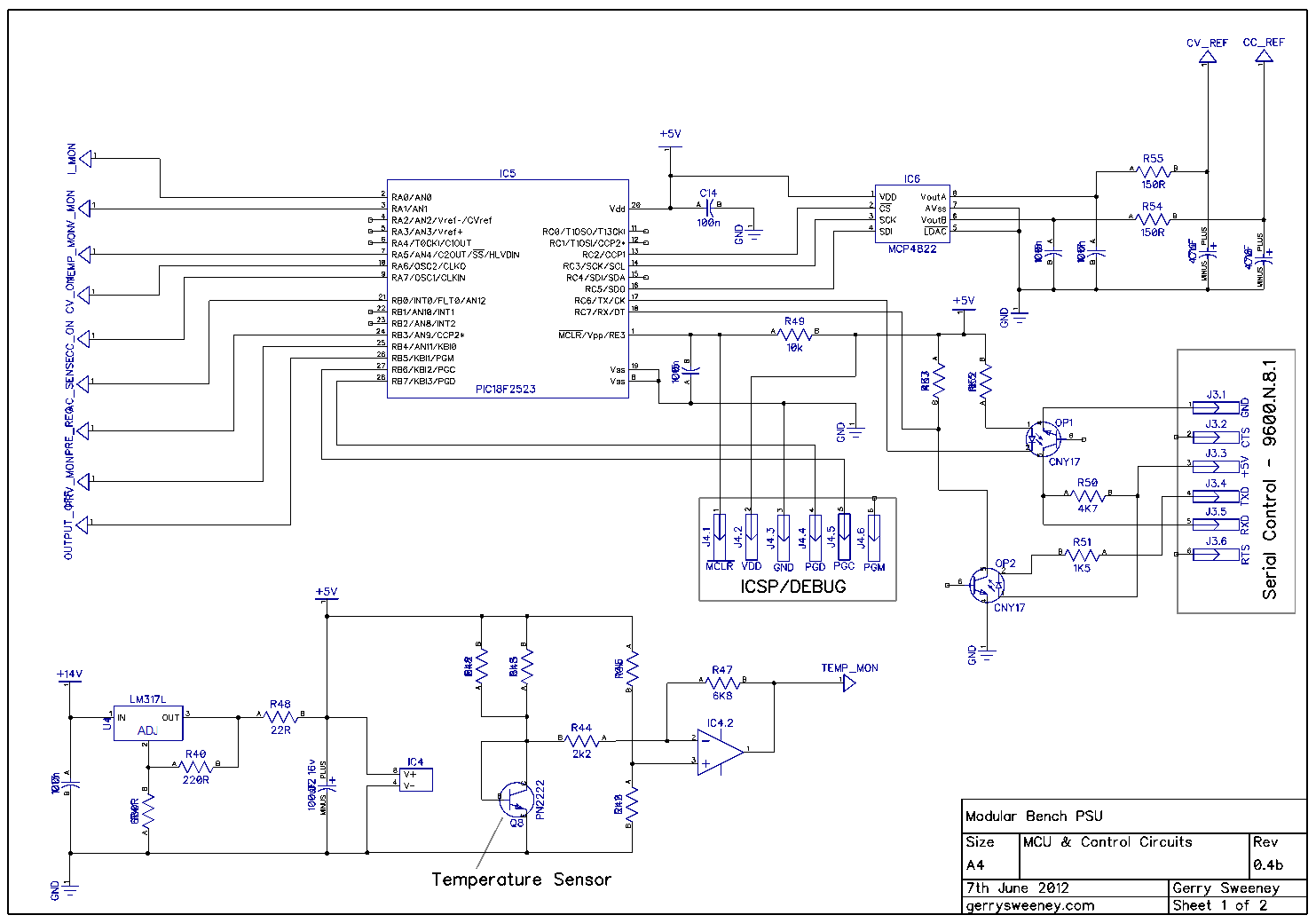

So back to Microchip – here is a practical example of what I mean. In a project I am working on using a PIC32 I thought it would be nice to structure some of the code using C++, but I found that in order to use the C++ features of the free XC32 compiler I have to install a “free licence” which requires me to not only register my personal details on the Microchip web site but also to tie this request to the MAC address of my computer – so I am suspicious, there is only one purpose for developing a mechanism like this and thats to control access to certain functions for commercial reasons. I read on a thread in the Microchip forums that this is apparently to allow Microchip management to assess the demand for C++ so they can decide to put more resources into development of C++ features if the demand is there – a stock corporate response – and I for one don’t buy it. I would say its more likely that Microchip want to assess a commercial opportunity and collect contact information for the same reasons, perhaps even more likely for marketing to feed demand for a sales pipeline. Whats worse is after following all the instructions I am still getting compiler errors stating that my C++ licence has not been activated. Posting a request for help on the Microchip forum has now resolved this but it was painful, it should have just worked – way to go Microchip. Now because of the optimisation issues, I am now sitting here wondering if I should take the initiative and start looking at a Cortex M3 or and Atmel AVR32 – I wonder how many thoughts like this are invoked in Microchip customers/developers because of stupid tooling issues like this?

You do not need market research to know that for 32-bit Micro-controllers C++ is a must-have option in the competitive landscape – not having it leaves Microchip behind the curve again – this is not an extra PRO feature, it should be there from the off – what are you guys thinking! This position is made even worse by the fact that the XC32 toolchain is built broadly around GCC which is already a very good C++ compiler – why restrict access to this capability in your build tool. If Microchip wanted to know how many people want to make use of the C++ compiler all they need to do is ask the community, or perform a simple call-home on installation or even just apply common sense, all routes will lead to the same answer – none of which involve collecting peoples personal information for marketing purposes. The whole approach is retarded.

This also begs another question too – if Microchip are building their compilers around GCC which is an open source GPL project, how are Microchip justifying charging a licence fee for un-crippled versions of their compiler – the terms of the GPL require that Microchip make the source code available for the compilers and any derived works, so any crippling that is being added could simply be removed by downloading the source code and removing the crippling behaviour. It is clear however that Microchip make all the headers proprietary and non-sharable effectively closing out any competitor open source projects – thats a very carefully crafted closed source strategy that takes full advantage of open source initiatives such as GCC, not technically in breach of the GPL license terms but its a one-sided grab from the open source community and its not playing nice – bad Microchip…..

Late in the game, Microchip are trying to work their way into the Arduino customer base by supporting the chipKIT initiative. It is rumoured that the chipKIT initiative was actually started by Microchip to fight their way back into the Arduino space to take advantage of the buzz and demand for Arduino based tools – no evidence to back it up but seems likely. Microchip and Digilent have brought out a 32bit PIC32 based solution, two boards called UNO32 and Max32, both positioned as “a 32bit solution for the Arduino community” by Microchip, these are meant to be software and hardware compatible although there are the inevitable incompatibilities for both shields and software – oddly they are priced to be slightly cheaper than their Arduino counterparts – funny that 🙂

Here is and interview with Ian Lesnet at dangerousprototypes.com and the team at Microchip talking about the introduction of the Microchip based Arduino compatible solution.

There is also a great follow-up article with lots of community comment all basically saying the same thing.

http://dangerousprototypes.com/2011/08/30/editorial-our-friend-microchip-and-open-source/

Microchip have a real up hill battle in this space with the ARM Cortex M3 based Arduino Due bringing an *official* 32bit solution to the Arduino community. Despite having an alleged “open source” compiler for the chipKIT called the “chipKIT compiler” its still riddled with closed-source bits and despite the UNO32 and MAX32 heavily advertising features like Ethernet and USB (which Microchip are known for great hardware and software implementations) these are only available on the UNO32 and MAX32 platforms if you revert back to Microchip’s proprietary tools and libraries – so the advertised benefits and the actual benefits to the Arduino community are different – and thats smelly too….

OK, so I am nearing the end of my rant and I am clearly complaining about the crippling of Microchip provided compilers, but I genuinely believe that Microchip could and should do better and for some reason, perhaps there is a little brand loyalty, I actually care, I like the company and the parts and the tools they make.

One of my own pet hates in business is to listen to someone rant on about how bad something is without having any suggestions for improvement, so for what its worth, if I were in charge of product strategy at Microchip I would want to do the following: –

- I would hand the compilers over to the team or the person in charge that built the MPLAB-X IDE and would have *ALL* compilers pre-configured and installed with the IDE right out of the box. This would remove the need for users to set up individual compilers and settings. Note to Microchip: IDE stands for Integrated Development ENVIRONMENT, and any development ENVIRONMENT is incomplete in the absence of a compiler

- I would remove all crippling features from all compilers so that all developers have access to build great software for projects based on Microchip parts

- I would charge for Priority Support, probably at comparable rates that I currently charge for the PRO editions of the compilers – this way those companies with big budgets can pay for the support they need and get additional value from Microchip tools, while Microchip can derive its desired PRO level revenue stream without crippling its developer community.

- I would provide all source code to all libraries, this is absolutely a must for environments developing critical applications for Medical, Defence and other systems that require full audit and code review capability. By not doing so you are restricting potential market adoption.

- I would stop considering the compilers as a product revenue stream, I would move the development of them to a “cost of sale” line on the P&L, set a budget that would keep you ahead of the curve and put them under the broader marketing or sales support banner – they are there to help sell silicon – good tools will create competitive advantage – I would have the tools developers move completely away from focusing on commercial issues and get them 100% focused on making the tools better than anything else out there.

- I would use my new found open strategy for tooling and both contribute to, and fight for my share of the now huge Arduino market. The chipKIT initiative is a start but its very hard to make progress unless the tool and library strategy is addressed

Of course this is all my opinion with speculation and assumptions thrown in, but there is some real evidence too. – I felt strongly enough about it to put this blog post together, I really like Microchip parts and I can even live with the stupid strategy they seem to be pursuing with their tools but I can’t help feeling I would like to see Microchip doing better and taking charge in a market that they seem be losing grip on – there was a time a few years ago where programming a Micro-controller and PIC were synonymous – not any more, it would seem that Arduino now has that. All of that said, I am not saying that I do not want to see the Arduino team and product to continue to prosper, I do, the founders and supporters of this initiative, as well as Atmel have all done an amazing job at demystifying embedded electronics and fuelling the maker revolution, a superb demonstration of how a great strategy can change the world.

If I have any facts (and I said facts, not opinion) wrong I would welcome being corrected – but please as a reminder, I will ignore any comments relating to Atmel is better than PIC is better than MPS430 etc…that was not the goal of this article.

Please leave your comments on the article